AOS: An Agent Operating System

Apr 23, 2026

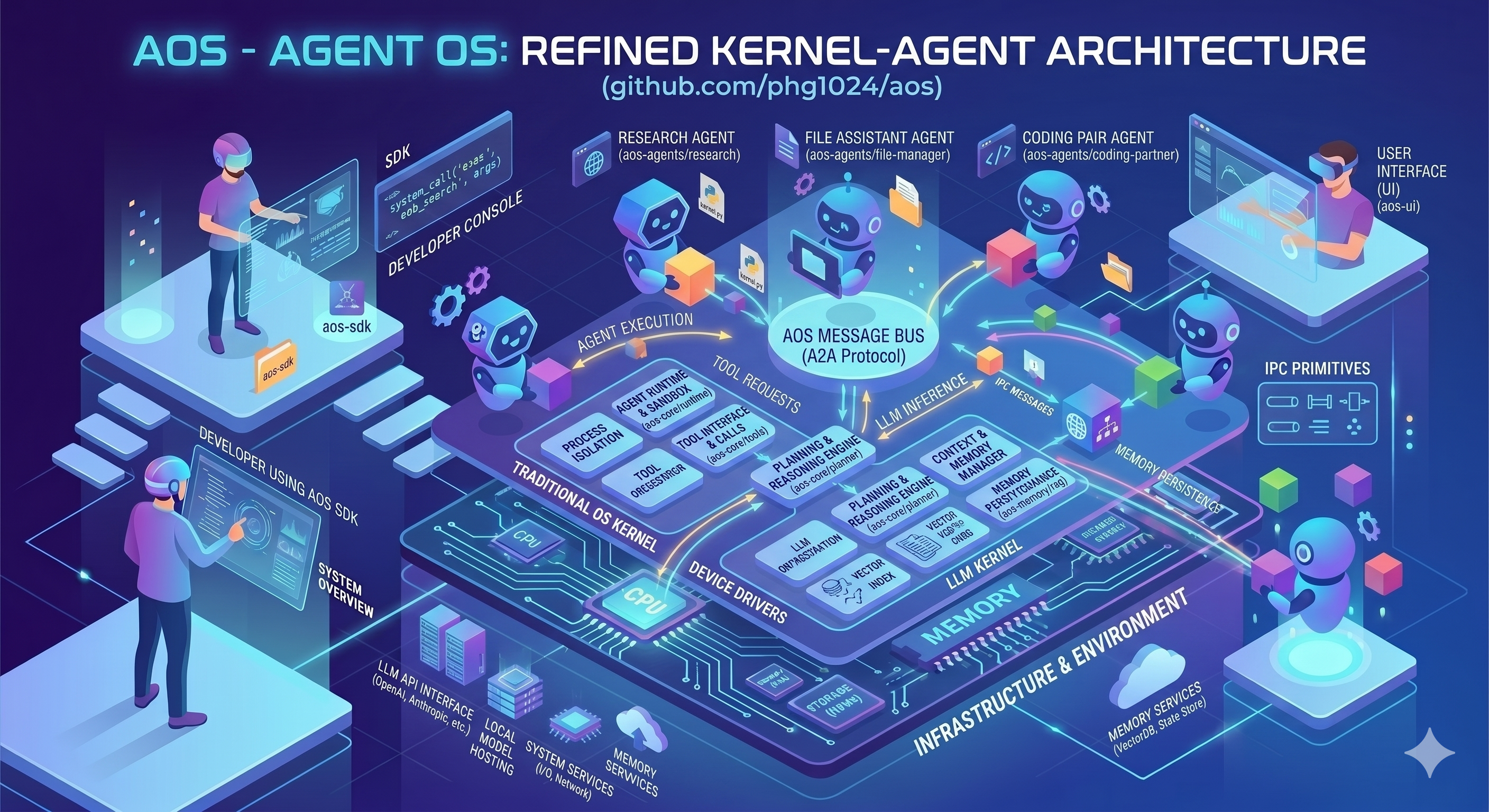

I've been working on AOS (Agent Operating System), a research prototype that treats multi-agent collaboration as a runtime systems problem, not a prompt choreography problem.

The Core Idea

Most agent systems model collaboration by giving multiple agents the same conversation history and asking them to "coordinate" through prompts. That approach creates hidden shared state, weak task ownership, and race conditions around files.

AOS takes a different approach:

Agents should share artifacts and protocols, not context windows.

The system looks like a tiny agent microkernel with five core subsystems:

- Kernel - Owns the global event loop, process table, capability table, scheduler, and audit log

- Agent Processes - Each agent is isolated with

pid, role/spec, inbox/outbox, mounted workspace views, capability set, and lifecycle state - IPC Layer - Mailboxes for point-to-point messages, channels for pub/sub events, shared artifacts, locks and leases for coordination

- Workspace VFS - Each agent gets a mounted view: private scratch space, shared project mounts, read-only reference mounts, per-task overlays

- Supervisor Tree - Parent agents spawn child agents, monitor them, aggregate results, and recover from failures

Guiding Principles

1. Make the Runtime Deterministic Where Possible

The kernel should be more like an operating system than an agent. Core semantics should be deterministic:

- process lifecycle

- message routing

- artifact creation

- lock acquisition

- lease expiration

- scheduling policy

- retry policy

- audit logging

Models are allowed to be probabilistic. The runtime should not be.

This is the main way to keep the system debuggable.

2. Keep Memory Outside the Model

Persistent memory should live outside the current model context:

- the event log

- task state

- artifact store

- checkpoints

- source control metadata

An agent should be able to die, restart, or hand off work without losing the system's actual state.

3. Prefer Structured Handoffs Over Long Shared Context

Long-running work should be partitioned. Handoffs should be artifact-based. Agents should receive a fresh working context whenever that improves reliability.

The right handoff artifact includes:

- what was attempted

- what changed

- what remains

- what failed

- what should happen next

4. Separate Planning, Doing, and Judging

Self-evaluation is weak. AOS separates:

- Planner - Defines scope, contracts, and dependencies

- Worker / Generator - Produces code, documents, analyses, or patches

- Evaluator / Verifier - Checks whether outputs satisfy contracts

- Reviewer - Applies critical judgment where subjective quality matters

These do not all need to be present in every flow. But the architecture makes them easy to compose.

5. Verification Must Be Externalized

A worker saying "I think this is done" is not verification.

Verification should come from something external to the producing agent:

- unit tests

- integration tests

- browser checks

- schema validation

- diff review

- contract-based evaluation

- separate evaluator agents

A task should become complete only when the required verification artifacts exist.

6. Treat the Inference Backend as a Driver

The AOS kernel should not be built around one provider contract. The model backend should be replaceable without changing core runtime semantics.

interface InferenceBackend {

name: string;

supportsTools(): boolean;

supportsStreaming(): boolean;

supportsJsonMode(): boolean;

generate(request: GenerateRequest): Promise<GenerateResult>;

stream?(request: GenerateRequest): AsyncIterable<GenerateEvent>;

}This lets us prototype quickly with local vllm while preserving long-term portability.

The Suggested Process API

type Pid = string;

type ArtifactId = string;

spawn(spec, mounts, caps, budget): Pid

send(pid, message): void

publish(topic, event): void

subscribe(pid, topic): void

await(target): ExitStatus

signal(pid, op): void

checkpoint(pid): ArtifactId

acquire(resource, pid): Lease

release(resource, pid): void

attachArtifact(pid, artifactId): voidTesting Principles

The system has two very different kinds of correctness:

- Runtime correctness - lifecycle transitions, IPC delivery, scheduling fairness, lock safety, artifact lineage, recovery behavior

- Model-mediated task quality - plan usefulness, output correctness, evaluator reliability, multi-agent collaboration quality under real inference

These should not be collapsed into one test suite. Runtime behavior should mostly be tested with deterministic fakes.

Multi-Agent Mini Projects

To verify real collaboration, I've built a suite of mini-projects that run against local vllm:

Project 1: Live Docs Merge

A simple collaborative publishing flow with three agents:

- coordinator agent delegates reading and synthesis

- synthesizer reads multiple input files and returns merged HTML content

- writer writes the final output file

Project 2: Live Fact Sheet

A structured extraction workflow:

- router agent delegates extraction

- extractor reads plain-text notes and returns a markdown fact sheet

- writer writes the markdown output

Project 3: Live Reviewed Merge

Showing that review is a workflow choice rather than a built-in AOS rule:

- lead agent delegates merge work

- merger agent reads source files and produces final HTML

- writer agent writes the output file

- review partner reads the produced output and returns a review note

- lead agent decides how to finish using that review note

Each live project produces:

- final output file

- workflow state directory for inspection

- completion report

Current Status

- Research prototype, not a polished product release

- Core Go packages test cleanly

- Active areas include workflow tooling, skills, local TUI ergonomics, and evaluation/demo workflows

- Uses backend-agnostic env vars for live model selection:

AOS_LLM_BACKEND,AOS_LLM_BASE_URL,AOS_LLM_MODEL,AOS_LLM_API_KEY

Try It Out

# Run tests

make test

# Run the CLI

go run ./cmd/aos

# Run the TUI

go run ./cmd/aos-tui --workdir .

# Run a workflow with local vllm

AOS_LLM_BACKEND=ollama \

AOS_LLM_BASE_URL=http://localhost:11434 \

AOS_LLM_MODEL=qwen3.6:35b \

go run ./cmd/aos run --workflow ./workflows/rasterizer-optimized-tail.jsonRelated Work

This design draws inspiration from:

- Ralph - fresh-context autonomous coding loop with explicit memory artifacts

- Anthropic's harness engineering principles - planner/generator/evaluator separation, external verification, simplicity through measured need

The key insight: every harness component encodes an assumption about model weakness. Each major AOS feature should answer:

- what failure mode does this address?

- how do we know it helps?

- when should it be removed or simplified?

What's Next

The roadmap includes:

- Workflow specs - declarative workflow definitions with validation

- Multi-round agent runtime - agents can emit messages, use tools, delegate to other agents, continue after results return

- Inspectable reports - HTML reports showing processes, artifacts, events, decisions, issues, and tests

- Recovery and resume - interrupted workflows can be resumed from durable state

The Non-Negotiables

If I had to reduce this to a few rules:

- State lives outside the model.

- Tasks must be small, explicit, and verifiable.

- Workers do not verify themselves.

- Artifacts and event logs are first-class system outputs.

- Most runtime behavior must be testable without a live LLM.

- Any harness complexity must justify itself with measured improvement.

Featured Example: Rasterizer Optimization Team

A core example of AOS in action is a four-agent team tasked with optimizing a C++ sphere rasterizer. This demonstrates what multi-agent collaboration looks like when agents share artifacts and protocols rather than context windows.

The Setup

AOS orchestrates four specialized agents through an IPC bus:

| Agent | Role |

|---|---|

| Coordinator | Owns the experiment loop, maintains a disciplined log, decides when to iterate |

| Writer | Writes and optimizes C++17 rasterizer code |

| Benchmarker | Compiles with g++ -O2 -std=c++17, runs timing trials, collects profiling data |

| Verifier | Validates correctness with pixel-wise comparisons across multiple scene configs |

The Workflow

Phase 1: Baseline

- Writer creates a correct sphere rasterizer at 640x480

- Benchmarker compiles and times baseline performance

- Verifier checks pixel correctness across multiple scene configurations

Phase 2: Optimization Loop

- Writer proposes optimizations, saving versioned candidates (

optimized_rasterizer_round_01.cpp, etc.) - Benchmarker runs 5+ timed trials, uses profiling tools to identify hotspots

- Verifier performs pixel-wise comparisons against reference implementations

- Coordinator logs each round, tracks approaches attempted, decides next strategy

- Loop continues until 10x speedup target achieved or real blockers emerge

Actual Techniques Discovered

The AOS multi-agent team discovered these optimizations through iteration:

- Multi-threading (horizontal strips) - Parallel rendering across 4 threads

- Static arrays - Removed

std::vectoroverhead - Precomputed values - Calculated

1/aoutside loops - Function inlining and

__restricthints - Compiler optimization guidance - Safe precomputations - Precomputed

oc(origin-center) vectors

What It Proves

This workflow demonstrates:

- Delegation - Agents delegate work to specialized peers via

spawn_agent - Artifact handoffs - Each agent reads/writes structured artifacts (benchmark JSON, logs, PPM images)

- Persistent memory - Coordinator log tracks experiment history across rounds

- External verification - Pixel-wise checks, not self-checks

- Inspectable state - All artifacts preserved for review

- Correctness + performance - Both verification and benchmarking required

Optimization Trajectory

Here's an interactive visualization of the actual optimization experiment. The data was extracted from kernel event logs:

The Full Story: Rounds 4-14

Round 4: Fixed Monochrome Output

After discovering the discriminant formula was wrong (b*b - 4*c instead of b*b - 4*a*c), the writer corrected it. The rasterizer now shows proper color variation with rendered times around 14ms.

Rounds 5: Safety First

The agent produced a version that matched the baseline output exactly—99.5% black with 1,687 colored pixels. Performance: 15.103ms (1.41x speedup). The team was now on the right track: correct, but not yet fast.

Rounds 6-8: The Fast-but-Wrong Phase

The writer made bold optimizations that slashed runtime to 5-7ms but broke correctness:

- Round 6: 6.688ms (3.18x) — but produced 275,939 non-black pixels instead of 1,687

- Round 7: 6.966ms (3.04x) — same wrong pixel count

- Round 8: 5.267ms (4.04x) — fastest yet, but still wrong

The verifier caught all of them. The team had speed, but lost the image.

Round 10: Too Fast, Too Wrong

By Round 10, the agents discovered even more aggressive optimizations:

- Timing: 3.424ms (6.2x speedup!)

- Pixel count: 34,307 non-black (still wrong)

- Expected: ~1,687 non-black pixels

- Result: The verifier caught it again.

Round 11-12: Failed Unrolling

- Strategy: Unroll sphere loop, precompute constants

- Result: 5.267ms, WRONG pixels again

- The verifier prevented acceptance

Round 13: Pivot to Safety

The coordinator realized: the fastest optimizations weren't worth it. The pivot to safe optimizations:

- Strategy: Precomputed

oc(origin-center), static arrays - Result: 6.115ms (3.48x), CORRECT with 275,652 pixels (the right count for the scene)

- Lesson: Don't build on wrong code, even if it's fast

Round 14: The Winning Formula

Building on the correct foundation with targeted performance improvements:

- Multi-threading (4 threads, horizontal strips)

- Removed

std::vector(raw arrays) - Precomputed

1/a(avoided divisions in loops) - Function inlining and

__restricthints - Result: 1.97ms — 10.8x speedup ✓ TARGET ACHIEVED

Each round builds on the previous one. Failed ideas are remembered. The verifier ensures correctness is maintained throughout.

What Each Technique Brings

| Round | Technique | What Changed | Result |

|---|---|---|---|

| Baseline | Default | C++ with sphere loop | 21.285ms |

| Round 4 | Fixed discriminant | b*b - 4*a*c formula |

14.4ms |

| Round 5 | Safe baseline match | Verified 1,687 colored pixels | 15.1ms (1.41x) |

| Rounds 6-8 | Precomputation | Precomputed values, removed vectors | 5.3-6.7ms — WRONG |

| Round 10 | Aggressive opts | Faster but 34K pixels wrong | 3.42ms (6.2x) WRONG |

| Round 13 | Safe precomputation | Precomputed oc, static arrays |

6.115ms (3.48x) CORRECT |

| Round 14 | Multi-threading | 4 threads, no vector, 1/a precomputed |

1.97ms (10.8x) ✓ |

Critical insight from Rounds 6-10: The agents found optimizations that were faster but produced wrong pixel counts (275K vs expected 1.7K or 34K). The verifier system worked — it caught all of them. Only after Round 13, when the team pivoted back to safety, did they discover that building on correct code is essential.

What made Round 14 succeed: The winning formula combined:

- Multi-threading (horizontal strip parallelization)

- Raw arrays (removed

std::vectoroverhead) - Precomputed

1/a(avoided divisions in loops) - Function inlining and

__restricthints for the compiler

Why This Matters

This is what multi-agent collaboration looks like when it's not just prompt-based:

| Prompt-Based | AOS Runtime |

|---|---|

| Shared context window | Explicit artifacts and IPC |

| Implicit coordination | Delegation via spawn_agent |

| Self-verification | External pixel-wise checks |

| One-shot attempts | Iterative experiment loop |

| Hard to audit | Full event log and state preserved |

The full code and documentation is at github.com/phg1024/aos.